Hands-On Large Language Models (for True Epub)

Jay Alammar, Maarten Grootendorst

AI has acquired startling new language capabilities in just the past few years. Driven by the rapid advances in deep learning, language AI systems are able to write and understand text better than ever before. This trend enables the rise of new features, products, and entire industries. With this book, Python developers will learn the practical tools and concepts they need to use these capabilities today.

You'll learn how to apply pretrained large language models to use cases like copywriting and summarization; create semantic search systems that go beyond keyword matching; build systems that classify and cluster text to make large collections of documents understandable at scale; and use existing libraries and pretrained models for text classification, search, and clustering.

This book also shows you how to:

- Build advanced LLM pipelines to cluster text documents and explore the topics they belong to
- Build semantic search engines that go beyond keyword search with methods like dense retrieval and rerankers
- Learn various use cases where these models can provide value
- Understand the architecture of underlying Transformer models like BERT and GPT
- Get a deeper understanding of how LLMs are trained
- Optimize LLMs for specific applications with methods such as generative model fine-tuning, contrastive fine-tuning, and in-context learning

Year: 2024

Publisher: O'Reilly Media, Inc.

Language: English

File: PDF, 10.53 MB

您的标签:

IPFS:

CID , CID Blake2b

english, 2024

在1-5分钟内,文件将被发送到您的电子邮件。

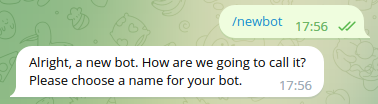

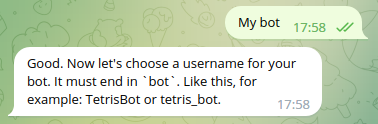

该文件将通过电报信使发送给您。 您最多可能需要 1-5 分钟才能收到它。

注意:确保您已将您的帐户链接到 Z-Library Telegram 机器人。

该文件将发送到您的 Kindle 帐户。 您最多可能需要 1-5 分钟才能收到它。

请注意:您需要验证要发送到Kindle的每本书。检查您的邮箱中是否有来自亚马逊Kindle的验证电子邮件。

正在转换

转换为 失败

关联书单

Amazon

Amazon  Barnes & Noble

Barnes & Noble  Bookshop.org

Bookshop.org  转换文件

转换文件 更多搜索结果

更多搜索结果 其他特权

其他特权