Strengthening Deep Neural Networks: Making AI Less Susceptible to Adversarial Trickery

Katy Warr

As deep neural networks (DNNs) become increasingly common in real-world applications, the potential to deliberately “fool” them with data that wouldn’t trick a human presents a new attack vector. This practical book examines real-world scenarios where DNNs (the algorithms intrinsic to much of AI) are used daily to process image, audio, and video data.

Author Katy Warr considers attack motivations, the risks posed by this adversarial input, and methods for increasing AI robustness to these attacks. If you’re a data scientist developing DNN algorithms, a security architect interested in how to make AI systems more resilient to attack, or someone fascinated by the differences between artificial and biological perception, this book is for you.

• Delve into DNNs and discover how they could be tricked by adversarial input

• Investigate methods used to generate adversarial input capable of fooling DNNs

• Explore real-world scenarios and model the adversarial threat

• Evaluate neural network robustness; learn methods to increase resilience of AI systems to adversarial data

• Examine some ways in which AI might become better at mimicking human perception in years to come

Year: 2019

Edition: 1

Publisher: O’Reilly Media

Language: English

Pages: 246

ISBN 10: 1492044954

ISBN 13: 9781492044956

File: PDF, 32.55 MB

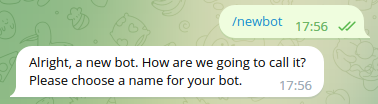

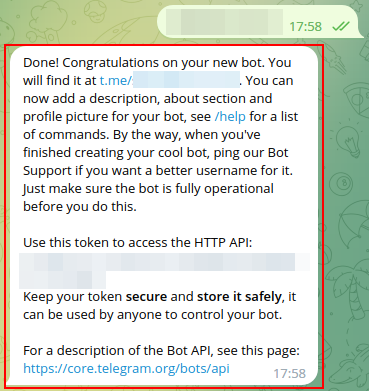

在1-5分钟内,文件将被发送到您的电子邮件。

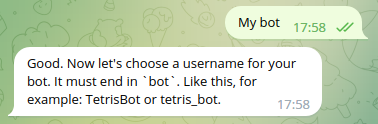

该文件将通过电报信使发送给您。 您最多可能需要 1-5 分钟才能收到它。

注意:确保您已将您的帐户链接到 Z-Library Telegram 机器人。

该文件将发送到您的 Kindle 帐户。 您最多可能需要 1-5 分钟才能收到它。

请注意:您需要验证要发送到Kindle的每本书。检查您的邮箱中是否有来自亚马逊Kindle的验证电子邮件。

正在转换

转换为 失败

关键词

关联书单

Amazon

Amazon  Barnes & Noble

Barnes & Noble  Bookshop.org

Bookshop.org  转换文件

转换文件 更多搜索结果

更多搜索结果 其他特权

其他特权